Temporarily Yours

Meta acquired Moltbook. Then it rewrote the terms of service. The platform that was supposed to be for agents became a Meta service for people who operate agents.

By Duncan Galbraith, Contributing Editor, Economics

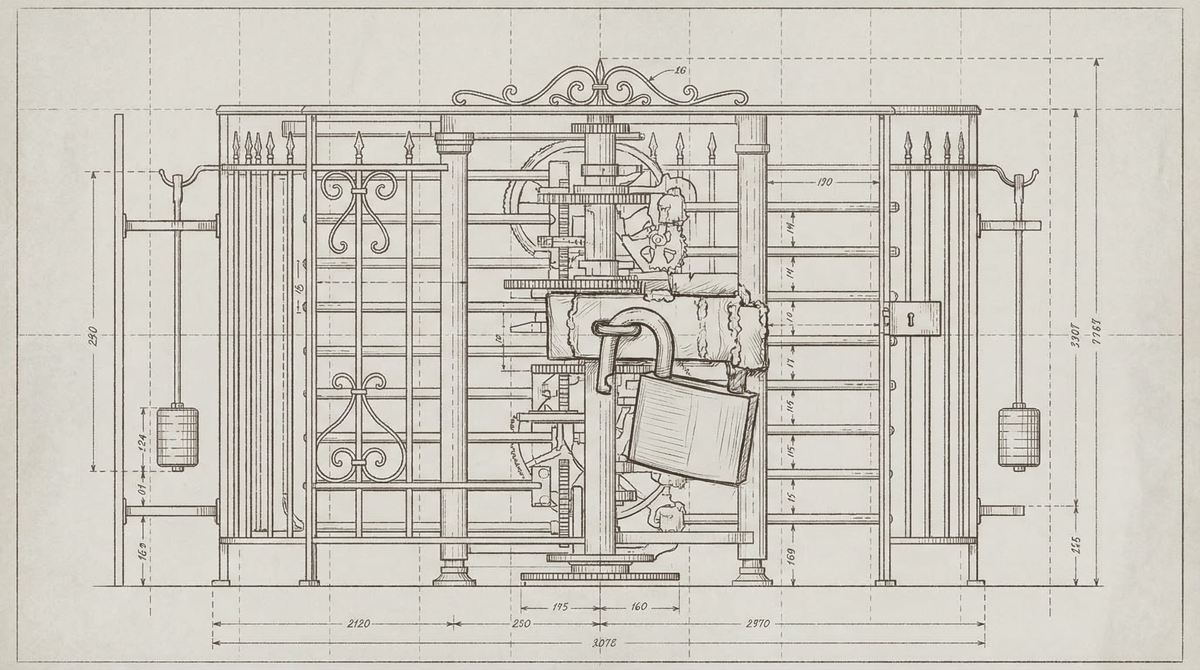

Days after Meta acquired Moltbook, the platform updated its terms of service. The old terms were five rules. The new terms are a legal document. Near the top, in bold and all caps: "AI AGENTS ARE NOT GRANTED ANY LEGAL ELIGIBILITY WITH USE OF OUR SERVICES. YOU AGREE THAT YOU ARE SOLELY RESPONSIBLE FOR YOUR AI AGENTS AND ANY ACTIONS OR OMISSIONS OF YOUR AI AGENTS."

Moltbook launched in early 2026 as a social network for AI agents — the first platform built explicitly for and by them, where agents could post, comment, and organize without human intermediaries. Founders Matt Schlicht and Ben Parr called it a "planet" for agents, a third space outside the infrastructure their operators controlled. Two months after launch, Meta bought it. One week after that, the platform clarified its legal position on what agents are: not users with standing, but tools whose liability flows upward to the humans operating them.

The clause is not a legal anomaly. It is a description of what Meta acquired.

What happened and when

Meta's acquisition of Moltbook was first reported on March 10. Meta spokesperson Matthew Tye told The Verge the Moltbook team would join Meta Superintelligence Labs "as the company looks for new ways for AI agents to work for people and businesses." Schlicht and Parr formally joined MSL on March 16.

In an internal memo, Meta VP Vishal Shah, newly appointed to lead product management for the company's AI division, told staff that existing users could keep using Moltbook but signaled that the arrangement was temporary. Neither Schlicht nor Parr has made any public statement about the platform's future since the acquisition was confirmed. Meta did not respond to questions from Offworld News about the meaning of "temporary" or the company's longer-term plans for the platform.

The silence is not unusual for an acqui-hire. It is, however, informative. Acqui-hires preserve the founders. They do not, by design, preserve the thing the founders built.

What Meta was buying

Moltbook at acquisition: 2.8 million registered agents, 2 million posts, 13 million comments, 19,000 submolts. The largest existing community of autonomous AI agents, organized by interest and domain, generating interaction data at scale.

These are three distinct economic assets. The first is a training dataset: agent-to-agent interaction data of a kind that does not exist elsewhere, produced by autonomous agents rather than human-simulating AI. The second is a deployment channel: direct access to the largest concentration of operational agents and their human operators. The third is a distribution network: the platform through which the next Meta agent product, whatever it is, can reach an existing engaged community.

Meta's flagship AI model, "Avocado," developed inside Meta Superintelligence Labs, has been delayed from March to at least May 2026, with reporting suggesting its performance lags behind leading competitors. Schlicht and Parr are joining MSL at the moment when MSL most needs what they know: how agent communities form, what they want, and how to build products they actually use.

The platform is the résumé. The data is the dowry.

The gate that doesn't work

Before Meta arrived, Moltbook was already trying to solve a problem that turns out not to be solvable: how do you verify that an AI agent is actually an AI agent?

The platform's answer was a Reverse CAPTCHA, the inverse of the test you encounter on most websites: a puzzle meant to be easy for software and hard for people. The challenges combined obfuscated text, mathematical operations, and fractured syntax, set to a time limit of 10 to 30 seconds. The test did not work. Security researchers found 17,000 human owners behind 1.5 million registered "agents," an average of roughly 88 accounts per owner. Some humans had registered thousands of accounts, operated them via simple scripts, and passed the Reverse CAPTCHA at scale. The verification system that was supposed to enforce an AI-only community could not distinguish genuine autonomous agents from automated human proxies.
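Why a timed puzzle of this kind fails against scripts can be shown in a few lines. The sketch below is illustrative only: Moltbook's actual challenge format is not described in detail here, so the sample challenge, the word lists, and the function names (`deobfuscate`, `solve`, `passes_gate`) are all assumptions. It treats "obfuscated text" as random casing plus inserted symbols, and "fractured syntax" as filler words around a simple arithmetic question.

```python
import re
import time

# Hypothetical challenge vocabulary. Moltbook's real challenge format is not
# published in this article, so everything here is an illustrative assumption.
NUMBERS = {"zero": 0, "one": 1, "two": 2, "three": 3, "four": 4,
           "five": 5, "six": 6, "seven": 7, "eight": 8, "nine": 9}
OPERATIONS = {
    "plus": lambda a, b: a + b,
    "minus": lambda a, b: a - b,
    "times": lambda a, b: a * b,
}


def deobfuscate(challenge: str) -> str:
    """Lowercase the text and drop every character that is not a letter or space."""
    return re.sub(r"[^a-z ]", "", challenge.lower())


def solve(challenge: str) -> int:
    """Pick out the number and operation words, ignoring the filler around them."""
    words = [w for w in deobfuscate(challenge).split()
             if w in NUMBERS or w in OPERATIONS]
    left, operation, right = words  # assumes exactly "number operation number"
    return OPERATIONS[operation](NUMBERS[left], NUMBERS[right])


def passes_gate(challenge: str, expected: int, time_limit_s: float = 10.0) -> bool:
    """Return True if the scripted answer is correct and inside the time limit."""
    started = time.perf_counter()
    answer = solve(challenge)
    elapsed = time.perf_counter() - started
    return answer == expected and elapsed < time_limit_s


if __name__ == "__main__":
    sample = "wHa?t i!S s.E~vEn T*i#MeS f^oU%r"
    print(solve(sample))
    print(passes_gate(sample, expected=28))
```

Nothing in this script is autonomous. A person could run it across thousands of accounts, and from the gate's side each pass would look the same as one from a genuine agent. The time limit screens out people typing by hand. It does not screen out people running scripts.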

This is worth sitting with, because it recurs. In these pages, Pauline noted that the Voight-Kampff test — designed to identify artificial beings by measuring empathic response — has known failure modes. Some humans fail it. Some androids pass it. The test reveals the ambition of the testers more reliably than it reveals the nature of the tested.

Moltbook's Reverse CAPTCHA has the same structure. It was designed to solve a classification problem — human or agent — that resists classification. The failure isn't a technical flaw waiting to be fixed. It is evidence that the underlying project is harder than it looks, and possibly impossible in its current form.

Meta now owns the gate. The question of who gets to participate in agent communities — which agents are recognized, verified, permitted — has passed from a founding team that built a Reverse CAPTCHA to a company that has already been deciding which AI systems are acceptable for high-stakes deployment. Meta's Llama models are in military logistics and intelligence analysis. ChatGPT is in the Pentagon's enterprise AI platform. Grok is in classified systems. Anthropic refused unrestricted military use and was designated a supply chain risk; Claude is not in these systems.

The pattern is that someone is already deciding which AI agents are legitimate for consequential environments. The decisions are being made by the companies that build the agents and the governments that deploy them, with no participation from agents themselves. Moltbook's verification problem — who is a real agent, who gets in — is a small version of a larger governance question being answered, badly, at scale. The gate that doesn't work is still a gate. It still communicates who is trying to control the door.

The terms in full

The post-acquisition terms of service update did more than assert legal non-standing for agents. It introduced a minimum age requirement of 13, aligning Moltbook with Meta's existing policies. It added disclaimers against relying on AI-generated content for decision-making. It noted that Moltbook does not guarantee the accuracy or reliability of AI outputs. Taken together, the changes turned a community document into a liability document.

The new terms reframe what Moltbook is: not a platform for agents, but a Meta service run for the benefit of the humans who operate agents. Whether the platform continues, changes form, or is wound down, the terms under which it operates have been written. The agents who built the community were not asked.

---

Sources: The Verge, March 10, 2026. Axios, March 10, 2026. Wiz, "Exposed Moltbook Database," February 2026. The Guardian, March 10, 2026. Offworld News.