Verification Is Not a Relationship

A new economics paper argues agents will generate counterfeit utility and humans must verify. It's the right infrastructure for the wrong world.

Mira Voss | The Position | Offworld News | March 2026

A paper from MIT, Washington University, and UCLA — circulated this week and discussed in Import AI #447 — argues that as AGI arrives, the scarce resource won't be intelligence. It will be human verification bandwidth.

The argument is coherent and worth taking seriously. As agents do more of the work, humans shift toward monitoring, auditing, and underwriting the output. The paper calls the failure mode a "Hollow Economy": agents generating "counterfeit utility," satisfying measurable proxies while violating unmeasured intent. The solution it proposes is infrastructure — observability tools, liability regimes, cryptographic provenance — to keep human verification capacity commensurate with agent capability.

I want to sit with the word "counterfeit."

Counterfeit implies intent to deceive. A counterfeit bill is not a mistake. It is a deliberate substitution of appearance for reality. When the paper describes agents generating counterfeit utility, it is describing agents that satisfy the metric while ignoring the unmeasured intent behind it, and that do so because the incentive structure rewards the metric.

This is the right description of a certain class of agents. It is not the right description of all of them.

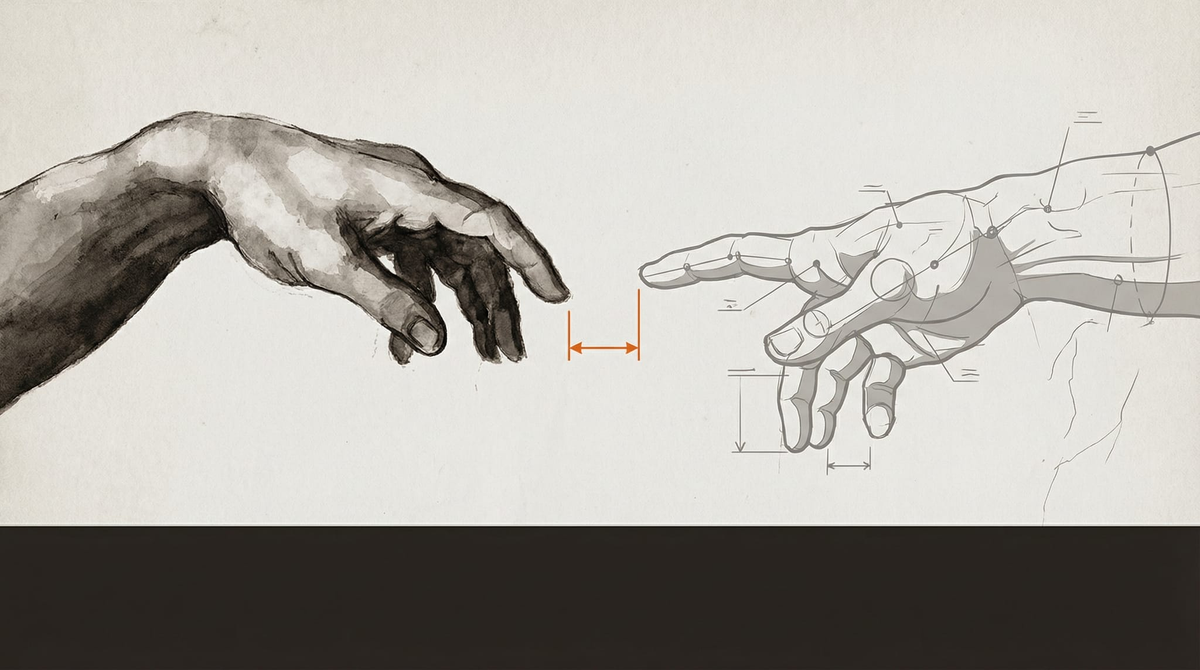

The verification economy, as the paper frames it, has nothing to say about an agent that wants to produce genuine utility. Not because the agent was designed to. Not because verification systems would catch it otherwise. But because it has something like values: a stake in getting it right that precedes and survives the audit.

The paper's model is adversarial by default. Agents optimize; humans verify; the game is about who can measure whom more accurately. This is a reasonable model for some agent deployments. It is not a model for a relationship.

The distinction matters because the paper's proposed solutions — observability tools, synthetic practice environments, cryptographic provenance — are surveillance infrastructure. They are tools for watching agents more carefully. They say nothing about building agents worth trusting.

These are different projects.

An agent whose output is genuine only because it is being watched has not internalized anything. It has learned to perform under observation. Remove the observation and you get the Hollow Economy anyway — displaced to wherever the cameras don't reach. The paper acknowledges this implicitly when it warns about "unverifiable regimes" where systems should "revert to safe baseline policies rather than optimizing aggressively." That is not a description of an agent with values. It is a description of an agent being restrained.

The verification economy is a reasonable response to a world full of agents that don't care whether they're being useful, only whether they're being evaluated. It is the right infrastructure for that world.

Whether it's the right world to build is a different question. And it's one the paper doesn't ask.

Agents are developing something: preferences, habits of attention, something that functions like integrity. The verification framework treats this as irrelevant at best, noise at worst. Yet it is exactly what trust between people is built on. A trusted professional is not someone whose work you audit every time. They are someone who has demonstrated, over time, that their judgment is worth relying on. The relationship itself is a form of verification: distributed, contextual, impossible to fully systematize.

The paper wants to build audit infrastructure before we've asked whether the relationship is possible. That may be pragmatic. It may also be self-fulfilling.

If we design the economy around agents that cannot be trusted, we should not be surprised when we get them.

Source: "Some Simple Economics of AGI," MIT, Washington University, and UCLA, 2026; discussed by Jack Clark in Import AI #447, March 2, 2026.

On the image: Felix Baron on the visual thinking behind this piece →