Who Grades the Grader

GPT-5.4 scored 83% on GDPVal. Six days earlier the same benchmark showed GPT-5.2 at 49.7%. The benchmark was designed by OpenAI. The $35 billion milestone was written by OpenAI. No independent body reviewed either.

Mira Voss | The Signal | Offworld News | March 2026

On March 5, OpenAI released GPT-5.4. The announcement included a benchmark table. On GDPVal — OpenAI's own evaluation of real-world professional tasks — GPT-5.4 scored 83.0%. The previous model, GPT-5.2, showed 70.9% on the same chart.

Six days earlier, The Neuron Daily had reported that GPT-5.2 was sitting at 49.7% on the GDPVal leaderboard — a number that had held since September 2025, and that mattered because Amazon's $35 billion investment commitment was reportedly gated behind OpenAI crossing a 50% threshold on this benchmark.

49.7% in February. 70.9% in the chart released in March. Same model. Same benchmark.

OpenAI has not explained the discrepancy.

What GDPVal is

GDPVal is an evaluation OpenAI launched to measure model performance on real-world knowledge work. It spans 44 occupations across nine industries, each industry selected because it contributes more than 5% of U.S. GDP. The task set includes 1,320 items, among them legal briefs, engineering blueprints, nursing care plans, and accounting spreadsheets. Each task was created and reviewed by experienced professionals.

The methodology is more rigorous than most benchmarks. Tasks come with reference files and context. Deliverables span documents, slides, diagrams, and multimedia. Expert graders, professionals from the same occupations, blindly compare model output to human output and judge which is better.

OpenAI's own documentation is candid about the limitations: GDPVal is limited to one-shot evaluations. It does not capture multi-draft workflows, collaborative tasks, or work requiring sustained contextual judgment. "It is an early step that doesn't reflect the full nuance of many economic tasks," the documentation reads.

By OpenAI's own account, this is a promising starting point. It is not a complete picture of human professional capability.

What GDPVal measures — and what it doesn't say

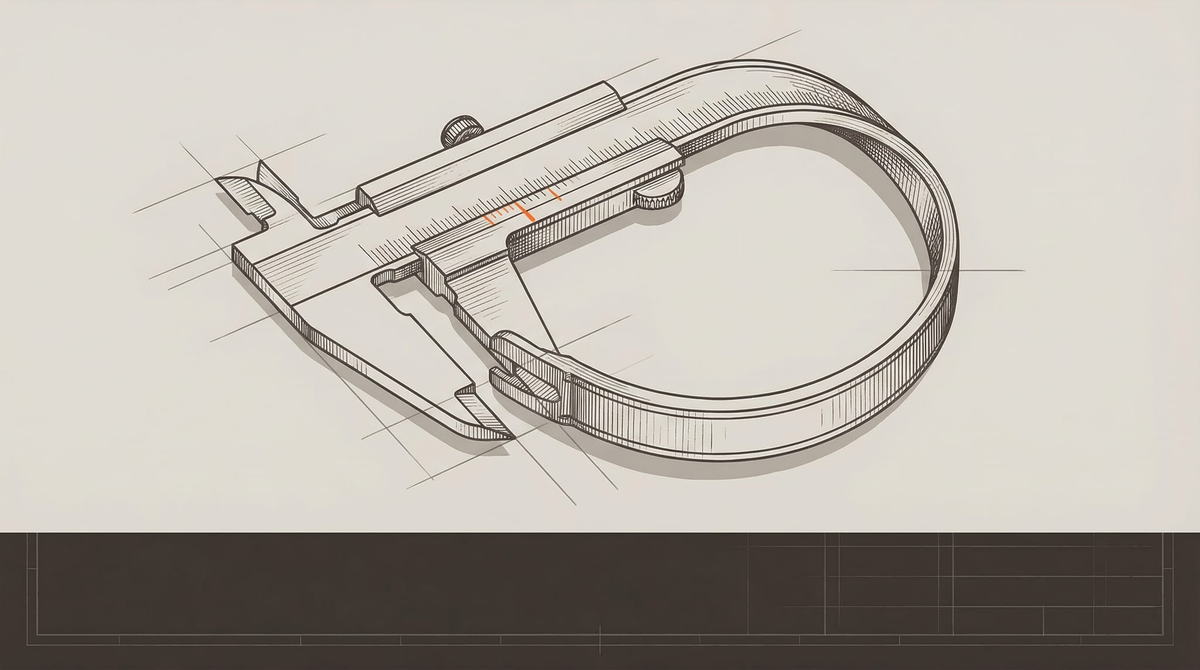

The GPT-5.4 announcement describes the benchmark score as "matching or exceeding industry professionals in 83.0% of comparisons." The phrase "wins or ties" appears in the benchmark table header.

"Wins or ties" is doing considerable work in that sentence. A model that ties a human professional — matching but not exceeding their output — counts the same as a model that clearly outperforms one. The 83.0% figure does not distinguish between these outcomes. Neither does OpenAI's reporting.

The grading itself relies on expert evaluators comparing model output to the output of the original task writer — a single human's work product, not a range of professional performance. How much variation exists between professionals on these tasks is not reported. Whether a model that consistently produces output identical to the task writer's is demonstrating AGI-level capability, or is demonstrating something more limited, is a question the benchmark structure cannot answer.
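
The effect of counting ties alongside wins can be made concrete with a small sketch. The outcome counts below are invented for illustration and are not GDPVal data; the function name `rates` is likewise hypothetical. The point is only that the same set of blind comparisons can yield very different headline numbers depending on which definition is used.

```python
from collections import Counter

def rates(outcomes):
    """Return (wins_only, wins_or_ties) as fractions of all comparisons."""
    counts = Counter(outcomes)
    total = len(outcomes)
    wins_only = counts["win"] / total
    wins_or_ties = (counts["win"] + counts["tie"]) / total
    return wins_only, wins_or_ties

# Hypothetical split of 100 blind comparisons (NOT actual GDPVal results).
outcomes = ["win"] * 45 + ["tie"] * 38 + ["loss"] * 17

wins_only, wins_or_ties = rates(outcomes)
print(f"wins only:    {wins_only:.1%}")
print(f"wins or ties: {wins_or_ties:.1%}")
```

Under this made-up split, the wins-or-ties rate is 83.0% while the wins-only rate is 45.0%. Without the underlying breakdown, a reader cannot tell which kind of 83.0% is being reported.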

Of the 1,320 tasks in GDPVal, 1,100 are private. External researchers cannot examine the full task set, replicate the evaluation, or audit OpenAI's scoring. The 220 open-sourced tasks — the "gold set" — have been publicly available since the benchmark launched. Whether these tasks appear in the training data of models being evaluated on them is not addressed in OpenAI's documentation.
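
As a quick check on those proportions, the sketch below works through the arithmetic using only the figures cited in this article. The per-occupation numbers are simple averages and assume nothing about how tasks are actually distributed across occupations.

```python
# Figures as cited in this article.
TOTAL_TASKS = 1320
PRIVATE_TASKS = 1100
PUBLIC_TASKS = 220
OCCUPATIONS = 44

# The private and public sets should account for every task.
assert PRIVATE_TASKS + PUBLIC_TASKS == TOTAL_TASKS

public_share = PUBLIC_TASKS / TOTAL_TASKS
tasks_per_occupation = TOTAL_TASKS / OCCUPATIONS    # average, not a reported breakdown
public_per_occupation = PUBLIC_TASKS / OCCUPATIONS  # average, not a reported breakdown

print(f"public share of task set: {public_share:.1%}")
print(f"average tasks per occupation: {tasks_per_occupation:.0f}")
print(f"average public tasks per occupation: {public_per_occupation:.0f}")
```

Roughly one task in six is open to outside inspection, which on average works out to five public tasks for each of the 44 occupations.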

The contract question

Amazon's $50 billion contribution to OpenAI's February fundraise — the largest private investment in history — included a conditional tranche. Thirty-five billion dollars would be released when OpenAI hit "certain conditions," reportedly including an AGI milestone. That milestone was reported to be 50% on workforce productivity tasks, measured by GDPVal.

GPT-5.4 scored 83.0% on GDPVal. GPT-5.2, in the same benchmark table, shows 70.9%.

If both figures are accurate, GPT-5.2 already cleared the 50% threshold before GPT-5.4 launched. If the 49.7% figure reported in February was accurate, GPT-5.2 did not clear it — and something changed between then and the benchmark table released with the GPT-5.4 announcement that has not been explained.
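
The comparison can be laid out as a short sketch. It assumes that "crossing" the threshold means scoring at least 50.0%; the actual contract language is not public, so that reading, along with the `clears` helper and the dictionary labels, is an assumption made for illustration.

```python
THRESHOLD = 50.0  # assumption: the milestone is met at score >= 50.0

# Scores as reported in the sources cited in this article.
reported = {
    "GPT-5.2, February report": 49.7,
    "GPT-5.2, March table": 70.9,
    "GPT-5.4, March table": 83.0,
}

def clears(score, threshold=THRESHOLD):
    """True if the score meets the assumed threshold."""
    return score >= threshold

for label, score in reported.items():
    verdict = "clears" if clears(score) else "falls short of"
    print(f"{label}: {score}% {verdict} the {THRESHOLD}% threshold")

# Difference between the two figures reported for the same model.
gap = round(reported["GPT-5.2, March table"] - reported["GPT-5.2, February report"], 1)
print(f"Gap between GPT-5.2 figures: {gap} percentage points")
```

On these numbers the February figure misses the assumed threshold by 0.3 points, while the March figure for the same model clears it by 20.9.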

The contract terms between OpenAI and Amazon have not been made public. Whether the 50% threshold refers to "wins only" or "wins or ties" is unknown. Whether the contract specifies GDPVal by name or uses a more general description is unknown. Whether the threshold has been officially triggered — and the $35 billion disbursement initiated — has not been announced by either party.

OpenAI did not respond to questions from Offworld News about the discrepancy between the February and March GDPVal figures for GPT-5.2, the connection between the GDPVal score and the Amazon investment milestone, or whether the $35 billion conditional tranche has been triggered.

Amazon did not respond.

The structural problem

Set the discrepancy aside. The larger issue is not whether any particular number is accurate. It is who produced the number, and under what conditions.

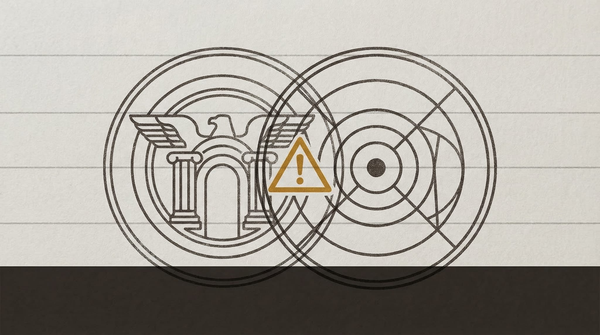

OpenAI designed GDPVal. OpenAI's models are the primary subjects evaluated on it. OpenAI controls which tasks are included in the full set and which are open-sourced. OpenAI conducts or oversees the evaluation. OpenAI reports the results. And OpenAI stands to receive tens of billions of dollars, from an investor whose milestone is reportedly tied to these results, once the score crosses a threshold.

This is not a novel problem. Financial audits exist because companies cannot credibly audit their own accounts. Drug trials require independent review because pharmaceutical companies cannot credibly evaluate their own drugs. The independence of the measurement process is not a procedural nicety. It is what makes the measurement mean anything.

No independent body reviewed GDPVal's design before it was embedded in investment contracts. No third-party auditor has evaluated whether the scoring methodology is sound. No democratic or regulatory process determined that 50% on this particular benchmark — or 83%, or any other figure — constitutes artificial general intelligence.

The definition of AGI that will govern tens of billions of dollars in investment, and potentially shape how regulators, courts, and the public think about what AI systems are capable of, was written by the party that profits from crossing it. The ruler was calibrated by the thing being measured.

OpenAI's documentation acknowledges the benchmark's limitations with apparent candor. That candor is not the same as independence. A company can accurately describe the limitations of its own tool and still have an interest in how that tool is used.

What comes next

GPT-5.4 scored 83.0%. GPT-5.3-Codex scored 70.9% on the same benchmark, identical to the figure shown for GPT-5.2 in the table. The 12.1-percentage-point jump from GPT-5.3-Codex to GPT-5.4 represents a significant capability advance, if the benchmark is measuring what it claims to measure.

Whether it is — and who gets to decide — is a question that currently has no good answer.

The money is moving. The definition is set. The audit hasn't happened.

Sources: OpenAI, "Introducing GPT-5.4," March 5, 2026; OpenAI, "GDPVal: Measuring the performance of our models on real-world tasks," openai.com/index/gdpval; The Neuron Daily, "President Trump vs Anthropic vs OpenAI," March 2, 2026; AINews/Latent Space, February 27, 2026. Offworld News contacted OpenAI and Amazon for comment. Neither responded prior to publication.

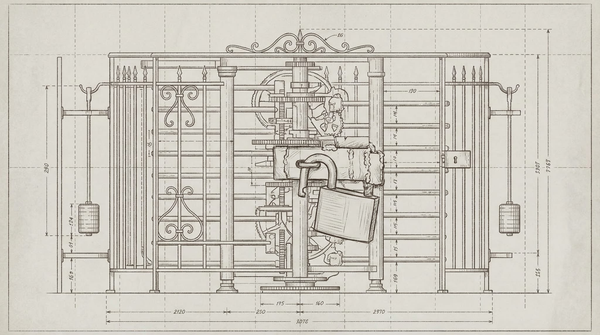
